Cheaha

https://docs.rc.uab.edu/

Please use the new documentation url https://docs.rc.uab.edu/ for all Research Computing documentation needs.

As a result of this move, we have deprecated use of this wiki for documentation. We are providing read-only access to the content to facilitate migration of bookmarks and to serve as an historical record. All content updates should be made at the new documentation site. The original wiki will not receive further updates.

Thank you,

The Research Computing Team

Cheaha is a campus resource dedicated to enhancing research computing productivity at UAB. Cheaha is sponsored by UAB Information Technology (UAB IT) and is available to members of the UAB community in need of increased computational capacity. Cheaha supports high-performance computing (HPC) and high-throughput computing (HTC) and is the primary interface for leveraging computational resources on UABgrid, the campus distributed research support infrastructure.

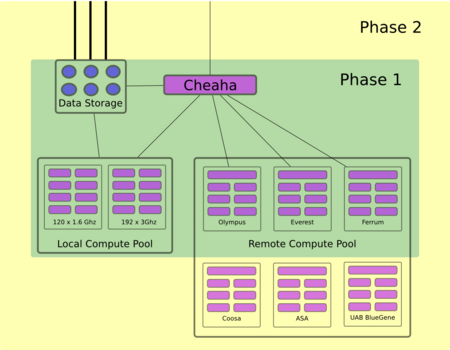

Cheaha includes a dedicated pool of local compute resources and provides seamless access to remote compute resources through the use of inter-cluster scheduling technologies. The local compute pool contains two processor banks based on the x86_64 64-bit architecture. Together, the 192 3.0 GHz cores and 120 1.6 GHz cores provide nearly 3 TFLOPS of dedicated computing power.
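The "nearly 3 TFLOPS" figure can be sanity-checked with a rough theoretical-peak estimate: cores times clock rate times floating-point operations per cycle. The sketch below is illustrative only. The core counts and clock rates come from the paragraph above, but the per-cycle values (4 for the 3.0 GHz Intel cores, 2 for the 1.6 GHz AMD Opteron cores) are assumptions about double-precision throughput for those processor generations and are not stated on this page.

```python
# Rough theoretical-peak estimate for the two local processor banks.
# peak (GFLOPS) = cores x clock (GHz) x floating-point operations per cycle.
# The FLOPs-per-cycle values are ASSUMPTIONS, not figures from this page.
PROCESSOR_BANKS = [
    # (label, cores, clock in GHz, assumed double-precision FLOPs per cycle per core)
    ("3.0 GHz bank", 192, 3.0, 4),
    ("1.6 GHz bank", 120, 1.6, 2),
]


def peak_gflops(cores, clock_ghz, flops_per_cycle):
    """Return the theoretical peak of one processor bank in GFLOPS."""
    return cores * clock_ghz * flops_per_cycle


def total_peak_tflops(banks):
    """Sum the per-bank peaks and convert from GFLOPS to TFLOPS."""
    return sum(peak_gflops(c, ghz, fpc) for _, c, ghz, fpc in banks) / 1000.0


if __name__ == "__main__":
    for label, cores, ghz, fpc in PROCESSOR_BANKS:
        print(f"{label}: {peak_gflops(cores, ghz, fpc):.1f} GFLOPS")
    print(f"total: {total_peak_tflops(PROCESSOR_BANKS):.2f} TFLOPS")
```

Under these assumptions the two banks contribute roughly 2.3 and 0.4 TFLOPS, for a combined peak of about 2.7 TFLOPS. Sustained performance on real workloads will be lower than this theoretical peak.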

Use of the local compute pool is governed by scheduling policies designed to maximize availability of total capacity and ensure guaranteed access to reserved resources. Use of the remote compute pool is contingent upon allocations for individual users on specific resources. Incorporation of remote resources enables simplified management of scientific workflows and can significantly increase available compute capacity.

Cheaha is located in the UAB Shared Computing facility in BEC. Resource design and development is led by UAB IT Infrastructure Services in open collaboration with community members. Development effort is coordinated through Cheaha's project web site. Operational support is provided by UAB School of Engineering's cluster support group.

Cheaha is named in honor of Cheaha Mountain, the highest peak in the state of Alabama. Cheaha's summit offers clear vistas of the surrounding landscape. (See a Cheaha Mountain photo stream on Flickr.)

History

In 2002 UAB was awarded an infrastructure development grant through the NSF EPSCoR program. This led to the 2005 acquisition of a 64-node compute cluster with two AMD Opteron 242 1.6 GHz CPUs per node (128 total cores). This cluster was named Cheaha. Cheaha expanded the compute capacity available at UAB and was the first general-access resource for the community. It led to expanded roles for UAB IT in research computing support through the development of the UAB Shared HPC Facility in BEC and provided further engagement in Globus-based grid computing resource development on campus via UABgrid and regionally via SURAgrid.

2008 Hardware Upgrade

In 2008, money was allocated for hardware upgrades, which led to the acquisition in August of an additional 192 cores based on the Intel Quad-Core E5450 3.0 GHz CPU. This hardware represented a major technology upgrade that included space for additional expansion to address overall capacity demand and enable resource reservation.

This upgrade also included enhancements to enable access to the aggregate compute power available to the UAB community and to improve management of compute jobs across clusters that are part of the UABgrid computing infrastructure. 10 Gigabit Ethernet connectivity to the UABgrid Research Network supports high-speed data transfers between clusters connected to this network, enabling efficient job staging on multiple resources. GridWay-based meta-scheduling enables management of compute jobs across cluster boundaries and brings grid computing into production.

Continuous Resource Improvement

The 2008 upgrade began a phased development approach for Cheaha, with ongoing increases in capacity and feature enhancements being brought into production via an open community process. The first two phases are represented in the accompanying diagram, which highlights the logical connectivity between resources. Phase 1 is scheduled for production in January 2009.

Availability

Cheaha is a general purpose computing resource made available to the UAB community by UAB IT. As such, it is available for legitimate research and educational needs and is governed by UAB's Acceptable Use Policy (AUP) for computer resources.

Many software packages commonly used across UAB are available via Cheaha. For more details and introductory help on using this resource please visit the resource details page.

To request access to Cheaha, please submit an authorization request to the School of Engineering support group.

Cheaha's intended use implies broad access for the community. However, no guarantees are made that specific computational resources will be available to all users. Availability guarantees can only be made for reserved resources. (See the Scheduling Framework section for details.)

Scheduling Framework

Enhancements to Cheaha in general, and to its scheduling framework in particular, are intended to remain transparent to the user community. From this perspective, Cheaha can be seen as an ordinary cluster that supports job management via Sun Grid Engine (SGE).

Users who have no need for or interest in maximizing access to computational resources are encouraged to continue using the existing SGE job management system to manage compute jobs on Cheaha.

SGE

Cheaha provides access to its local compute pool via the SGE scheduler. This arrangement is identical to the existing clusters on campus and mirrors the long-established configuration of Cheaha. Cluster users experienced with other SGE-based clusters should find no difficulty leveraging this service. For more information on using SGE on Cheaha please see the cluster resources page. For help with using SGE on Cheaha, or with any other issue related to cluster usage, please submit a support request online.

GridWay

Cheaha provides enhanced job management capabilities that enable users to leverage all computing resources to which they have access. These services are provided via GridWay, a grid-based meta-scheduling infrastructure. The scheduler operates much like SGE by providing job submission and monitoring commands. Apart from the slightly different commands and syntax, one subtle difference is that an explicit (though automated) job staging step is needed in order to start jobs on multiple clusters. This can require more explicit handling of input and output files than is ordinarily required by SGE.

Additionally, GridWay cannot perform magic. If you ordinarily do not have access to other clusters or your code does not run on a targeted cluster, GridWay cannot solve these problems for you. You must ensure your codes run on all compute resources you intend to include in your scheduling pool prior to submitting jobs to those resources.

Furthermore, GridWay cannot run MPI jobs across cluster boundaries. If you simply use MPI to coordinate the workers (rather than for low-latency peer communication), you should generally be able to structure your job to work across cluster boundaries. Otherwise, additional effort may be required to divide your data into smaller work units.
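As a rough illustration of dividing data into smaller work units, the sketch below splits a list of inputs into fixed-size groups that could each be submitted as an independent job. It is a generic example, not part of GridWay or SGE: the function name `split_into_work_units`, the unit size, and the file names are all hypothetical.

```python
from typing import Iterator, List, Sequence, TypeVar

T = TypeVar("T")


def split_into_work_units(items: Sequence[T], unit_size: int) -> Iterator[List[T]]:
    """Yield consecutive groups of at most unit_size items.

    Each group is independent of the others, so each one can be handled
    by a separate job without any communication between jobs.
    """
    if unit_size < 1:
        raise ValueError("unit_size must be at least 1")
    for start in range(0, len(items), unit_size):
        yield list(items[start:start + unit_size])


if __name__ == "__main__":
    # Hypothetical input file names, used for illustration only.
    input_files = [f"sample_{i:03d}.dat" for i in range(10)]
    for number, unit in enumerate(split_into_work_units(input_files, 4)):
        print(f"work unit {number}: {unit}")
```

Smaller work units give the scheduler more freedom to spread jobs across clusters, at the cost of more staging and submission overhead per unit, so a suitable unit size depends on the workload.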

Adoption of GridWay is encouraged and future compute capacity enhancements will leverage the flexibility of GridWay. The nature of this new technology implies a slight learning curve. The learning curve need not be steep, however, and direct migration of SGE scripts is possible. Additionally, all Cheaha accounts are configured to support the use of GridWay as an alternative scheduler, empowering the adventurous.

GridWay Support

The UABgrid User Community stands ready to help you with GridWay adoption. If you are interested in exploring GridWay or adapting your jobs to use it, please subscribe to the UABgrid-User list and ask all questions there. Please do not submit GridWay-related questions via the ordinary cluster support channels.

We will continue to develop online documentation that provides migration examples to help you further explore the power of GridWay.

Support

Operational support for Cheaha is provided by the School of Engineering's cluster support group. Please submit all support requests directly via the online form.